Authors - Soheil Esmaeilzadeh, Gao Xian Peh, and Angela Xu

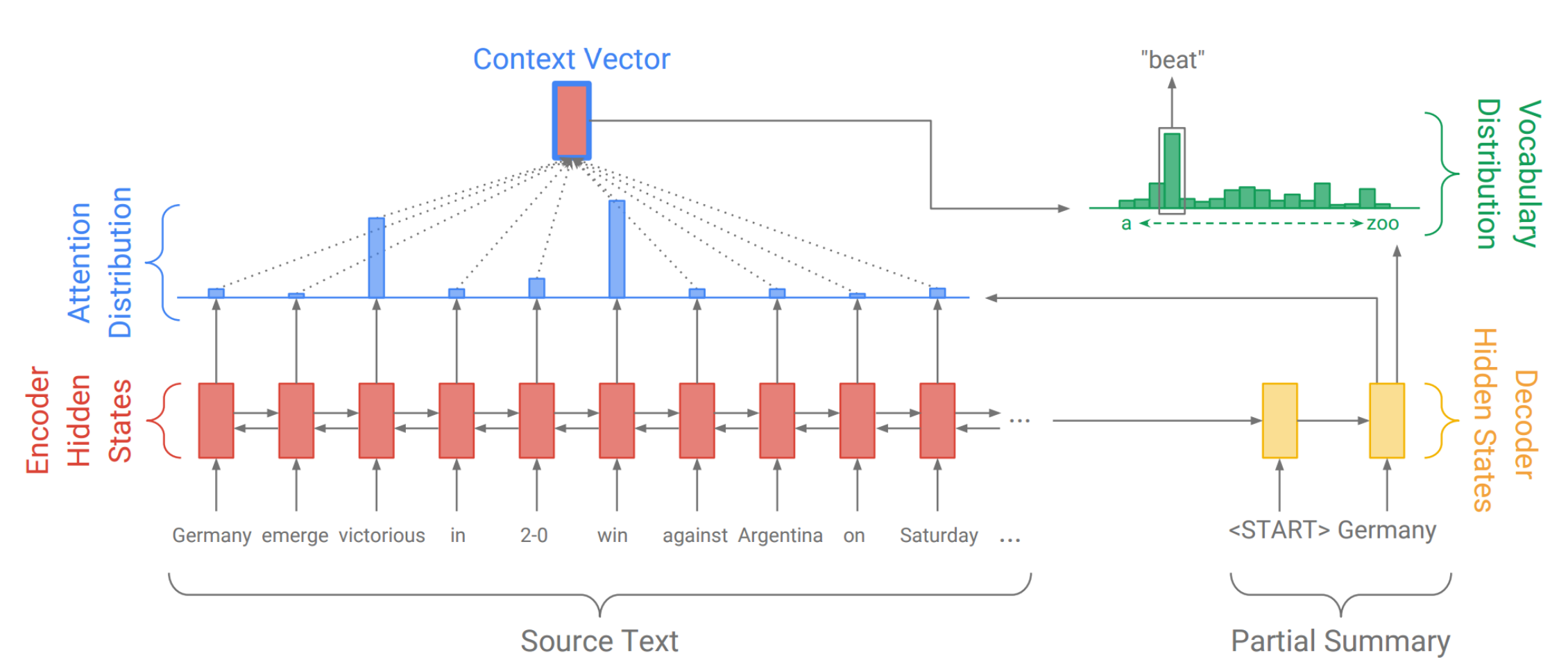

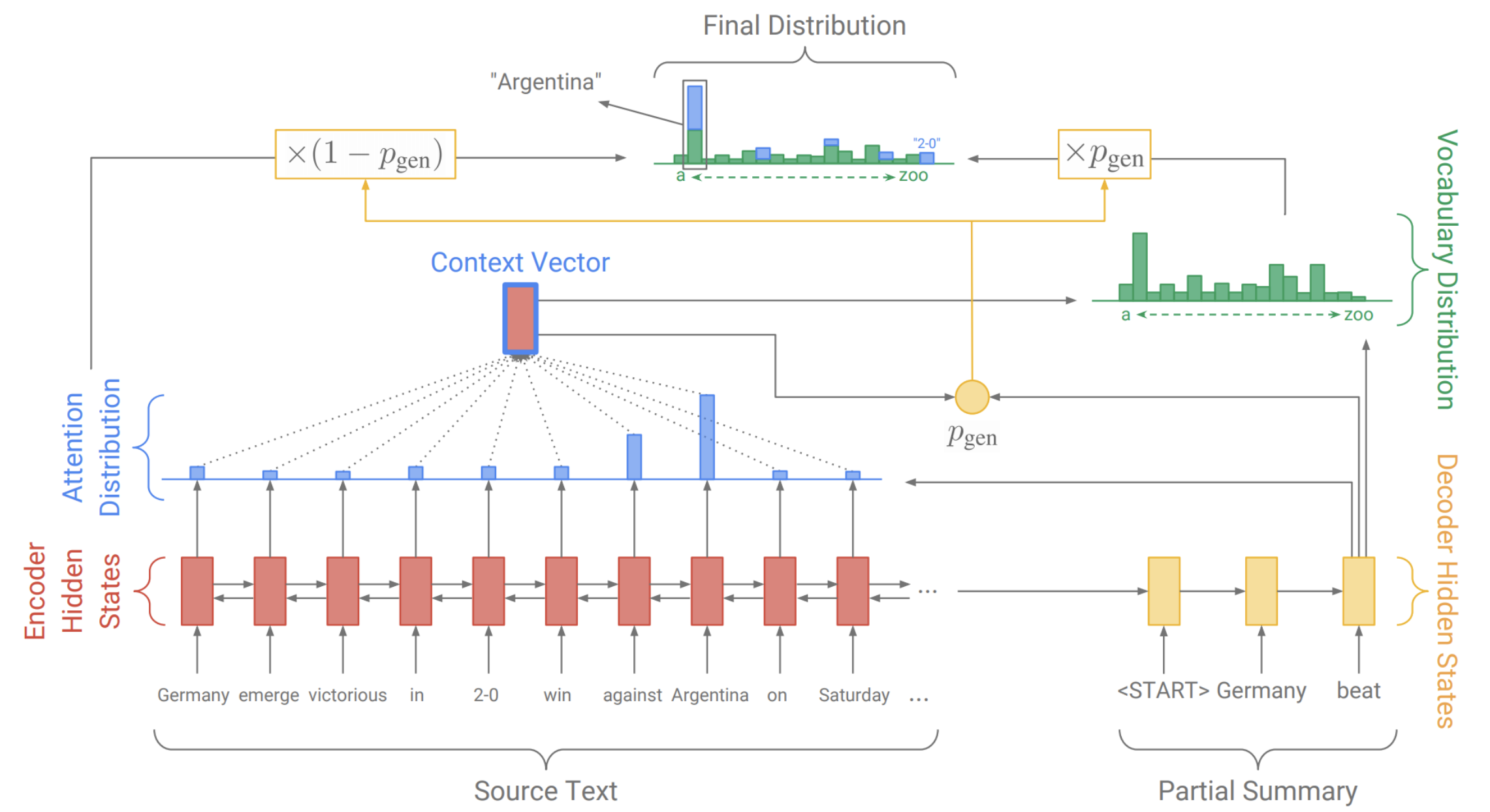

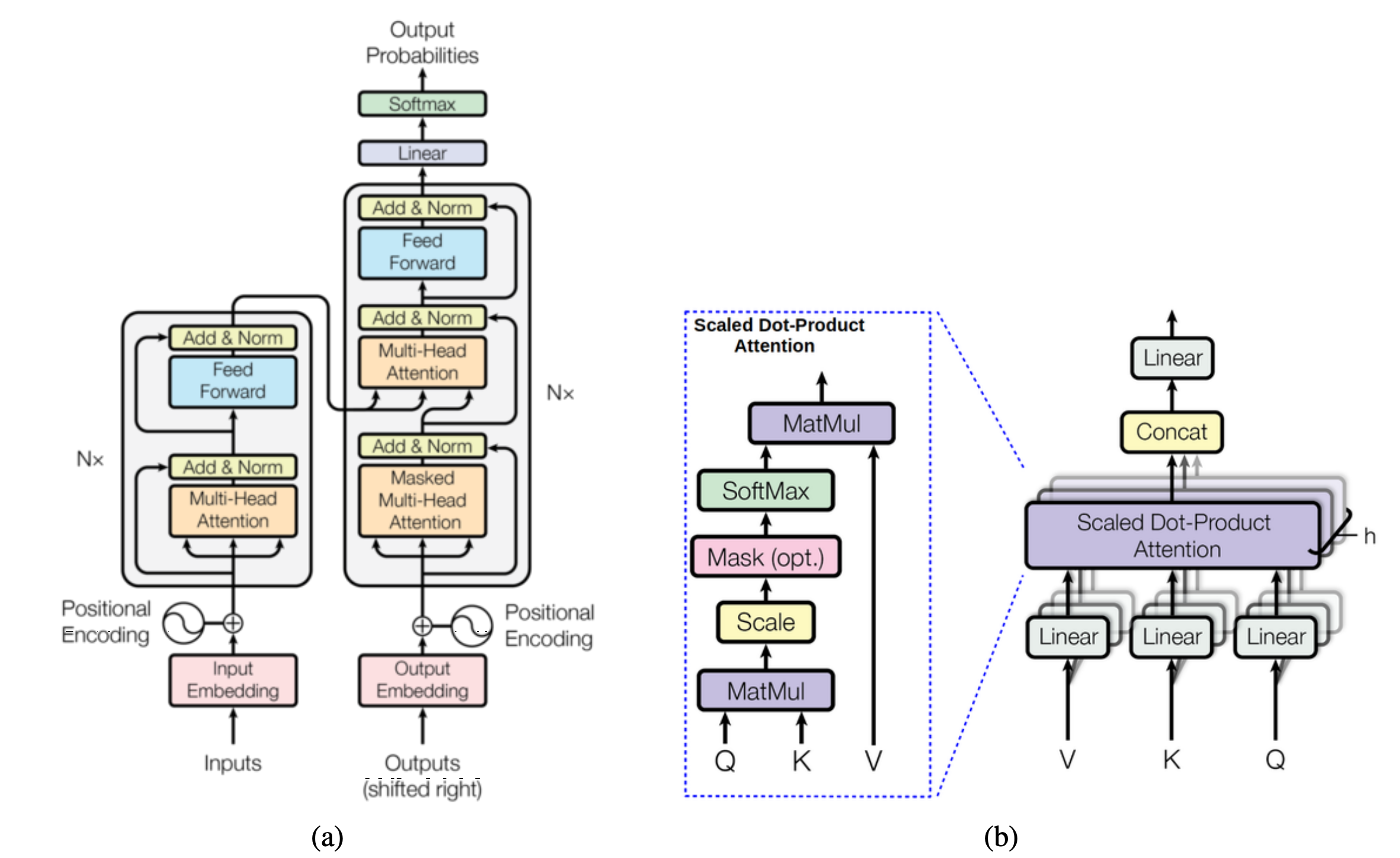

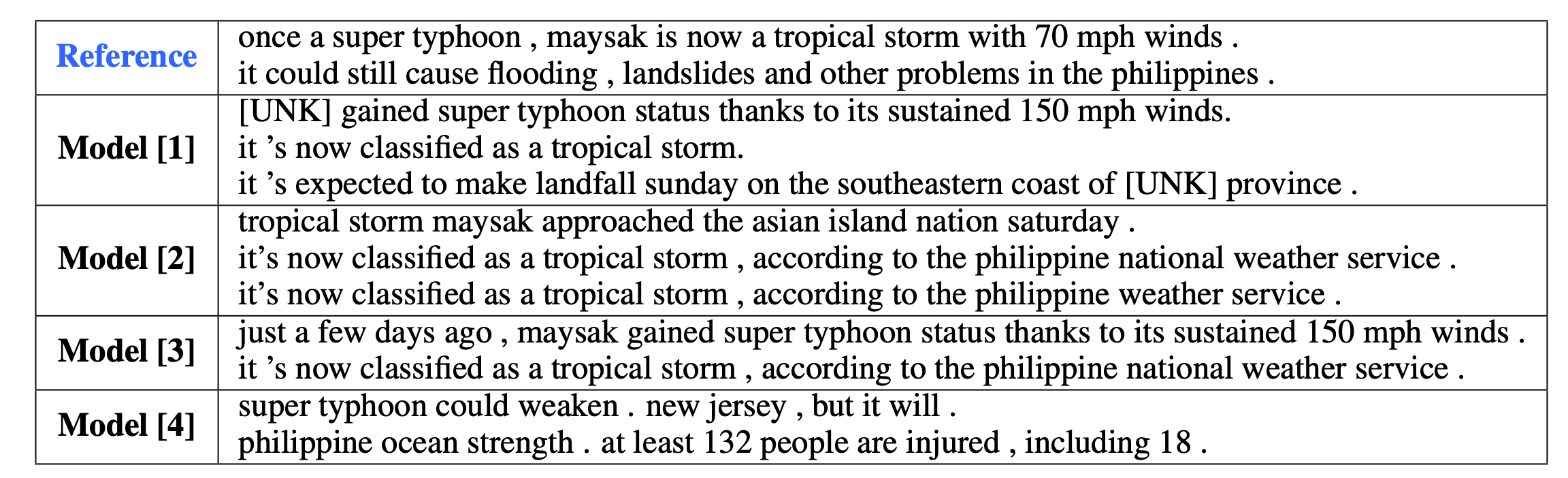

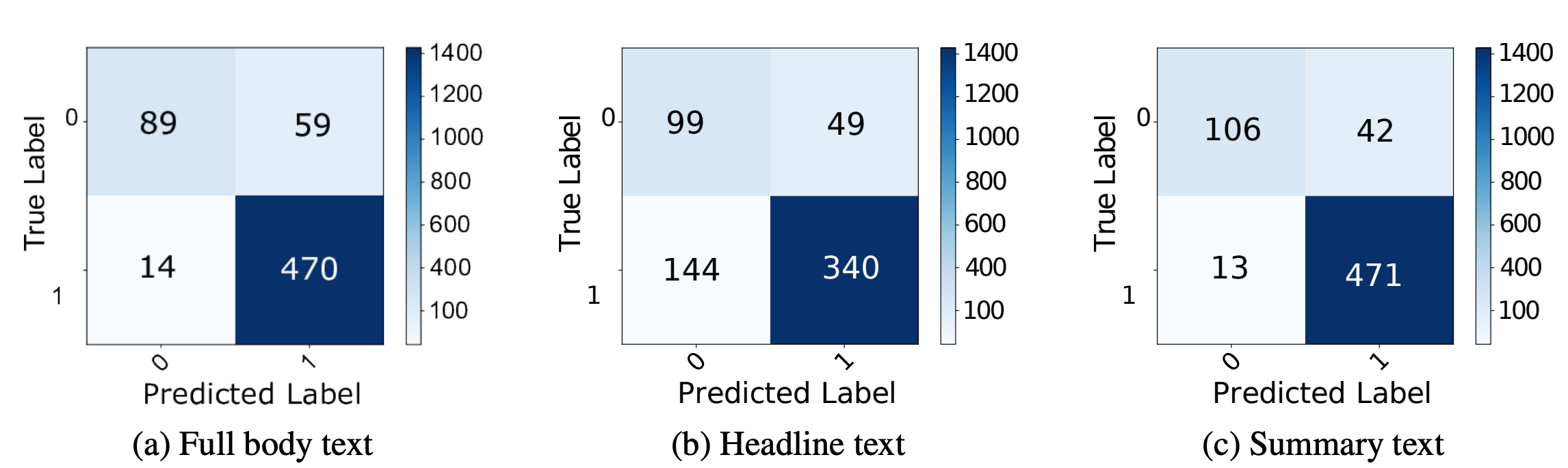

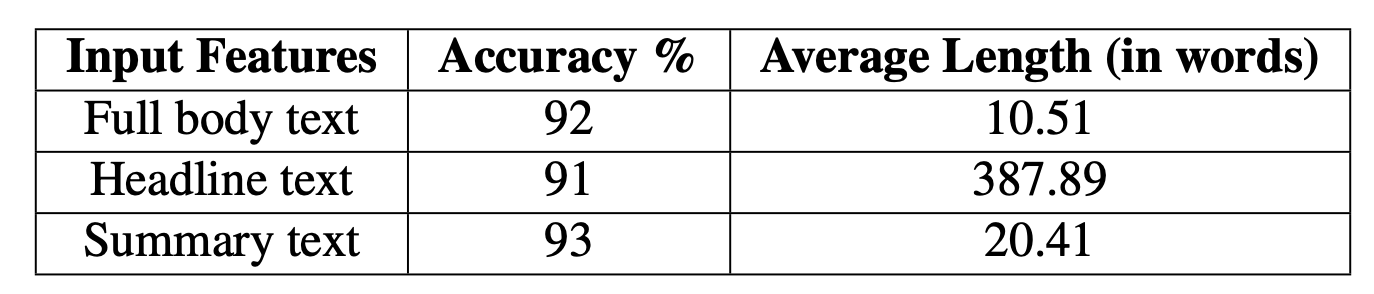

Abstract - In this work, we study abstractive text summarization by exploring several models: an LSTM encoder-decoder with attention, pointer-generator networks, coverage mechanisms, and transformers. After extensive hyperparameter tuning, we compare these architectures against each other on the abstractive text summarization task. Finally, as an extension of our work, we apply our text summarization model as a feature extractor for a fake news detection task: news articles are summarized prior to classification, and the results are compared against classification using only the original news text.

Keywords: Abstractive text summarization, Pointer-generator, Coverage mechanism, Transformers, Fake news detection